Agreement among 4 Raters: Weighted Analysis of the Distribution of Raters by Subject and Category

Input Data

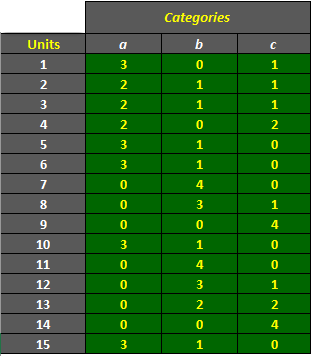

The dataset shown below is included in one of the example worksheets of AgreeStat360 and can be downloaded from that program. It shows the distribution of 4 raters by unit (or subject) and category. That is, 4 raters assigned each of the 15 units to one of the 3 categories a, b, and c, and the ratings were then organized in the form of a distribution of raters: for each unit, the number of raters who chose each category. AgreeStat360 can process your ratings data in this format as well.
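To make the "distribution of raters" layout concrete, here is a minimal Python sketch that turns raw ratings (one row per unit, one entry per rater) into per-unit category counts. The function name `to_rater_distribution` and the three sample units are hypothetical and used only for illustration. They are not the 15-unit example dataset and not part of AgreeStat360.

```python
from collections import Counter

CATEGORIES = ["a", "b", "c"]


def to_rater_distribution(raw_ratings, categories=CATEGORIES):
    """For each unit, count how many raters chose each category."""
    table = []
    for unit_ratings in raw_ratings:
        counts = Counter(unit_ratings)
        table.append([counts.get(cat, 0) for cat in categories])
    return table


# Hypothetical raw ratings: one row per unit, one entry per rater (4 raters).
raw = [
    ["a", "a", "b", "a"],
    ["c", "c", "c", "c"],
    ["b", "a", "b", "c"],
]

distribution = to_rater_distribution(raw)
for row in distribution:
    print(row)  # counts for categories a, b, c; each row sums to 4
```

Each output row sums to the number of raters, which is a quick sanity check before loading data in this format.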

Analysis with AgreeStat/360

To see how AgreeStat360 processes this dataset to generate various agreement coefficients, please play the video below. The video can also be watched on youtube.com if a clearer view is needed.

Results

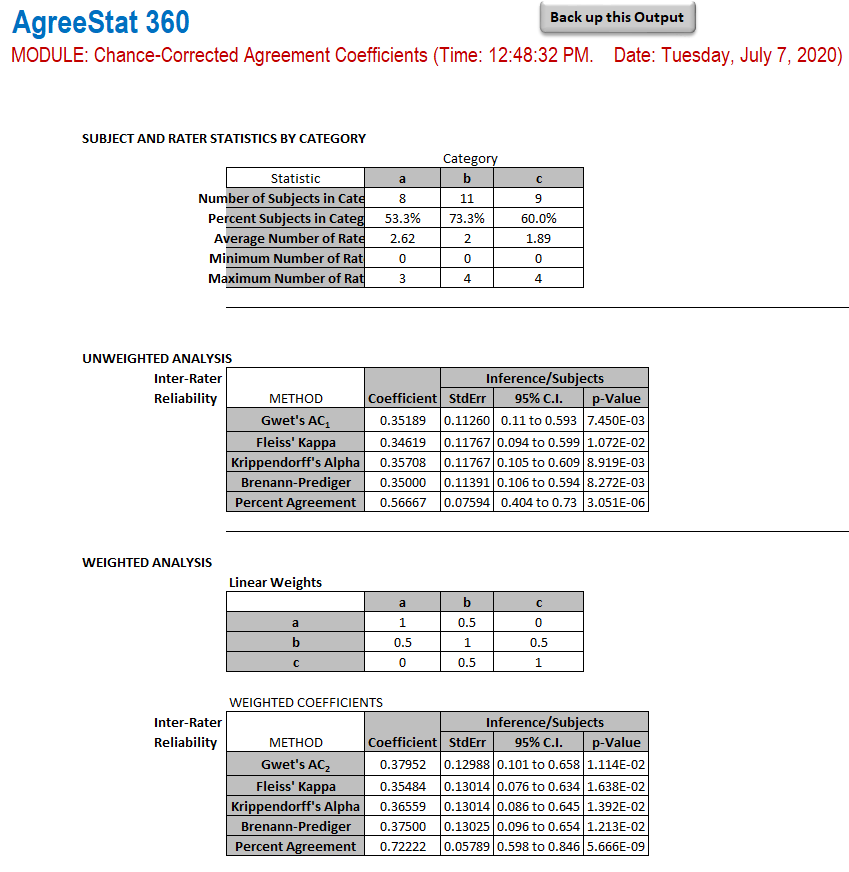

The output that AgreeStat360 produces is shown below. It contains 4 parts:

- Summary data: The first part of this output shows some general summary statistics.

- Unweighted analysis: A total of 5 agreement coefficients are reported, including Fleiss' kappa and Gwet's AC1. Each agreement coefficient is accompanied by its standard error, 95% confidence interval and p-value.

- Weights: The specific set of weights used to compute the weighted agreement coefficients.

- Weighted analysis: The weighted versions of the same 5 agreement coefficients are presented in this section of the output, along with their associated standard errors, 95% confidence intervals and p-values.
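As a rough illustration of how two of these coefficients relate to the distribution-of-raters table, the sketch below computes Fleiss' kappa and Gwet's AC1 (AC2 when weighted) from per-unit category counts. It is not AgreeStat360's implementation. It assumes the commonly published textbook formulas, assumes every unit was rated by at least 2 raters, and uses linear weights only as an example, since the weights AgreeStat360 applies to this dataset are not stated here. The function names and the 3-unit table are hypothetical, and standard errors, confidence intervals and p-values are not computed.

```python
def identity_weights(q):
    """Unweighted case: only exact matches count as agreement."""
    return [[1.0 if k == l else 0.0 for l in range(q)] for k in range(q)]


def linear_weights(q):
    """Assumed example weights: partial credit shrinks linearly with distance."""
    return [[1.0 - abs(k - l) / (q - 1) for l in range(q)] for k in range(q)]


def agreement_coefficients(table, weights):
    """Fleiss' kappa and Gwet's AC1/AC2 from a units x categories count table."""
    n = len(table)
    q = len(weights)

    # Observed (weighted) agreement, averaged over units.
    p_a = 0.0
    for row in table:
        r_i = sum(row)  # raters for this unit (assumed >= 2)
        weighted = [sum(weights[k][l] * row[l] for l in range(q)) for k in range(q)]
        p_a += sum(row[k] * (weighted[k] - 1.0) for k in range(q)) / (r_i * (r_i - 1))
    p_a /= n

    # Average share of raters choosing each category.
    pi = [sum(row[k] / sum(row) for row in table) / n for k in range(q)]

    # Chance agreement under each coefficient's model.
    pe_fleiss = sum(weights[k][l] * pi[k] * pi[l] for k in range(q) for l in range(q))
    total_weight = sum(sum(w_row) for w_row in weights)
    pe_gwet = total_weight / (q * (q - 1)) * sum(p * (1.0 - p) for p in pi)

    return {
        "percent_agreement": p_a,
        "fleiss_kappa": (p_a - pe_fleiss) / (1.0 - pe_fleiss),
        "gwet_ac": (p_a - pe_gwet) / (1.0 - pe_gwet),
    }


# Hypothetical table: 3 units, 4 raters, categories a, b, c.
table = [[4, 0, 0], [2, 2, 0], [0, 0, 4]]

unweighted = agreement_coefficients(table, identity_weights(3))
weighted = agreement_coefficients(table, linear_weights(3))
print(unweighted)
print(weighted)
```

With identity weights the function reduces to the unweighted coefficients. Other weights give partial credit to near-disagreements, which is why the weighted percent agreement is never lower than the unweighted one.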