4-Rater Agreement: Unweighted Analysis of Raw Scores

Input Data

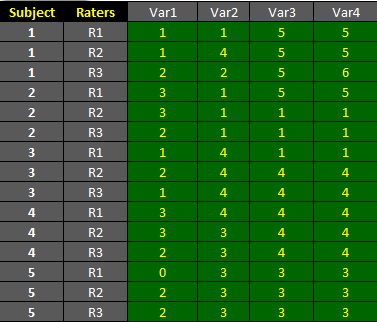

The dataset shown below is included in one of the example worksheets of AgreeStat 360 and can be downloaded from the program. It contains the ratings associated with 4 variables that 3 raters assigned to 5 subjects. This data is organized in the long format as shown in the table below.
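In the long layout, each row is assumed to hold one subject-rater pair, with one column per variable. Coefficients for a single variable are usually computed from a subject-by-rater table, so here is a minimal Python sketch of that pivot. The rows are made-up illustrative values, not the AgreeStat360 example dataset, and `LONG_ROWS` and `to_wide` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical long-format rows (NOT the AgreeStat360 example data):
# (subject, rater, Var1, Var2, Var3, Var4), one row per subject-rater pair.
LONG_ROWS = [
    (1, "R1", 1, 2, 3, 1),
    (1, "R2", 1, 2, 2, 1),
    (1, "R3", 1, 3, 3, 1),
    (2, "R1", 2, 1, 1, 2),
    (2, "R2", 2, 1, 1, 2),
    (2, "R3", 3, 1, 1, 2),
]


def to_wide(rows, var_index):
    """Pivot one variable into {subject: [rating from each rater]}.

    var_index is the 0-based position among the variable columns (0 -> Var1).
    Raters are sorted by label so every subject's list uses the same order;
    a missing subject-rater pair shows up as None.
    """
    table = defaultdict(dict)
    for subject, rater, *scores in rows:
        table[subject][rater] = scores[var_index]
    raters = sorted({row[1] for row in rows})
    return {s: [table[s].get(rater) for rater in raters] for s in sorted(table)}


print(to_wide(LONG_ROWS, 0))  # {1: [1, 1, 1], 2: [2, 2, 3]}
```

Each variable is pivoted separately, which mirrors the goal of analyzing the 4 variables one at a time.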

The objective is to use AgreeStat360 for Excel/Windows to compute unweighted agreement coefficients separately for each of the 4 variables, and to benchmark these coefficients against the Landis-Koch scale. More on benchmarking agreement coefficients can be found here.
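For reference, the Landis-Koch (1977) scale labels a coefficient below 0 as Poor, 0.00 to 0.20 as Slight, 0.21 to 0.40 as Fair, 0.41 to 0.60 as Moderate, 0.61 to 0.80 as Substantial, and 0.81 to 1.00 as Almost Perfect. The hypothetical helper below applies that scale to a point estimate only; it is a simplified sketch that ignores sampling error.

```python
def landis_koch(coef):
    """Map a coefficient's point estimate to its Landis-Koch label."""
    if coef < 0:
        return "Poor"
    # Upper bounds are inclusive: 0.20 is Slight, anything above is Fair, etc.
    for upper, label in [(0.20, "Slight"), (0.40, "Fair"),
                         (0.60, "Moderate"), (0.80, "Substantial")]:
        if coef <= upper:
            return label
    return "Almost Perfect"


print(landis_koch(0.45))  # Moderate
```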

Analysis with AgreeStat360

To see how AgreeStat360 processes this dataset to compute the agreement coefficients, play the video below. For a clearer view, the same video can also be watched on YouTube.

Results

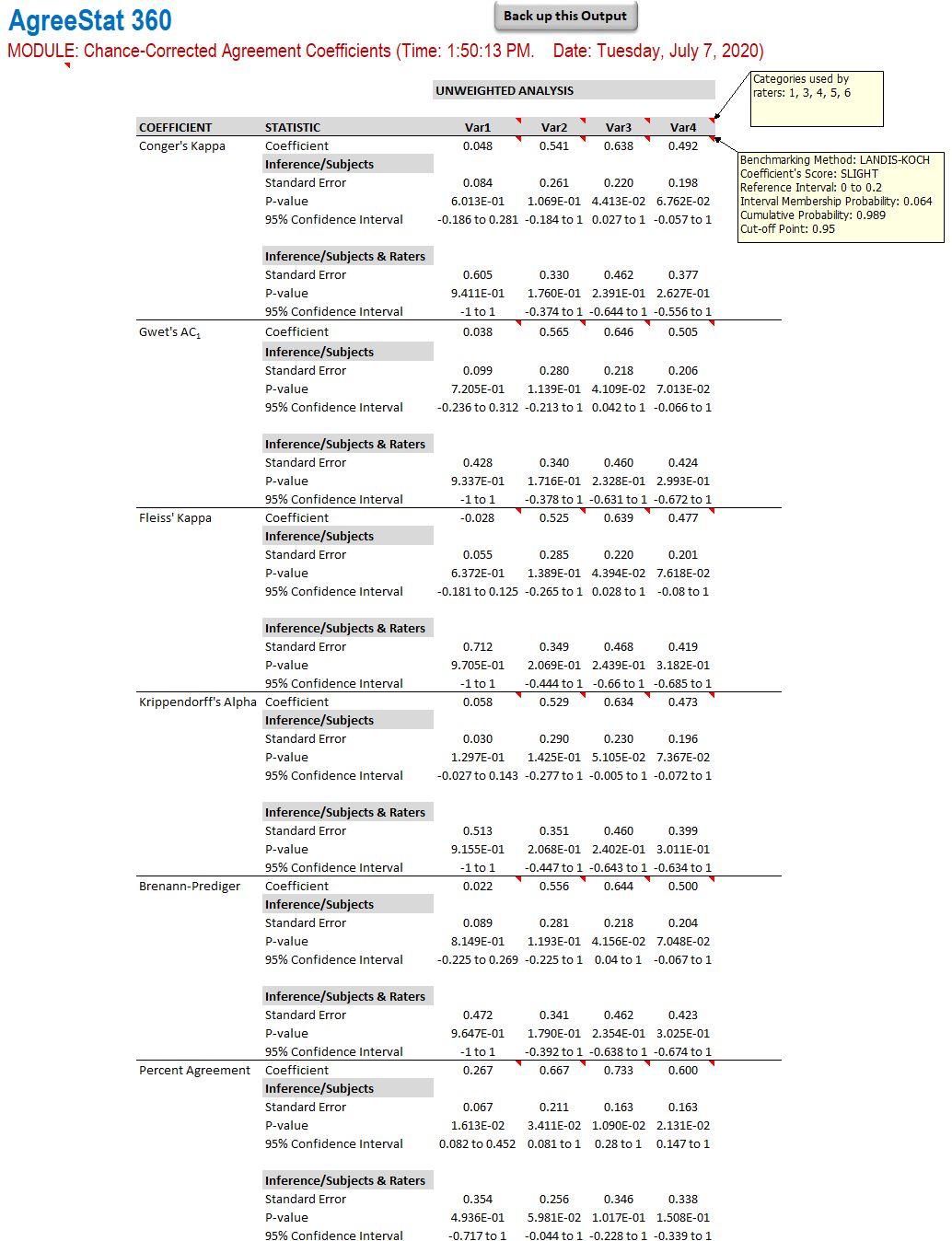

The output that AgreeStat360 produces is shown below:

- Variables: The 4 rightmost columns of the output table contain the statistics for the 4 variables being analyzed. Each variable name carries a comment listing the specific scores the raters used.

- Unweighted analysis: Six agreement coefficients are calculated, including Conger's kappa and Gwet's AC1. Each coefficient is reported with precision measures calculated in two ways: with respect to the subject sample only (i.e., raters are fixed and do not constitute a source of variation), and with respect to both the subject and rater samples, treated as 2 independent sources of variation affecting the coefficients. These precision measures are the standard error, the 95% confidence interval, and the p-value.
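To make the coefficient definitions concrete, here is a minimal Python sketch of percent agreement and four chance-corrected coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1, Brennan-Prediger) for complete data. All four share the form (pa - pe) / (1 - pe) and differ only in the chance-agreement term pe. The standard error uses a leave-one-subject-out jackknife, a swapped-in technique rather than AgreeStat360's own variance formulas; because only subjects are resampled, it corresponds to the "raters fixed" case. The confidence interval uses a normal approximation. The function names, the complete-data assumption, and the ratings are hypothetical.

```python
import math
from itertools import combinations


def agreement_coefficients(ratings, categories=None):
    """Unweighted multi-rater coefficients, assuming no missing ratings.

    ratings: one list per subject, one rating per rater in a fixed rater order.
    categories: the rating scale; defaults to the scores actually observed.
    """
    n, r = len(ratings), len(ratings[0])
    if r < 2:
        raise ValueError("need at least 2 raters")
    cats = sorted(categories if categories is not None
                  else {x for row in ratings for x in row})
    q = len(cats)
    if q < 2:
        raise ValueError("need at least 2 categories")
    # r_ik: number of raters who put subject i into category k
    counts = [[row.count(k) for k in cats] for row in ratings]
    # Observed agreement: share of agreeing rater pairs, averaged over subjects
    pa = sum(c * (c - 1) for row in counts for c in row) / (n * r * (r - 1))
    # pi_k: overall proportion of ratings in category k
    pi = [sum(row[j] for row in counts) / (n * r) for j in range(q)]
    # p_gk: proportion of subjects that rater g put in category k (Conger)
    pg = [[sum(row[g] == k for row in ratings) / n for k in cats] for g in range(r)]
    pairs = list(combinations(range(r), 2))
    chance = {
        "Conger's kappa": sum(sum(a * b for a, b in zip(pg[g], pg[h]))
                              for g, h in pairs) / len(pairs),
        "Fleiss' kappa": sum(p * p for p in pi),
        "Gwet's AC1": sum(p * (1 - p) for p in pi) / (q - 1),
        "Brennan-Prediger": 1 / q,
    }
    result = {"Percent agreement": pa}
    for name, pe in chance.items():
        # Undefined when chance agreement equals 1
        result[name] = (pa - pe) / (1 - pe) if pe < 1 else float("nan")
    return result


def jackknife_se(ratings, name, categories):
    """Jackknife SE over subjects (raters fixed) and a normal-approximation 95% CI."""
    n = len(ratings)
    est = agreement_coefficients(ratings, categories)[name]
    loo = [agreement_coefficients(ratings[:i] + ratings[i + 1:], categories)[name]
           for i in range(n)]
    mean = sum(loo) / n
    se = math.sqrt((n - 1) / n * sum((x - mean) ** 2 for x in loo))
    return est, se, (est - 1.96 * se, est + 1.96 * se)


# Hypothetical 4 subjects x 3 raters on the scale {1, 2}
ratings = [[1, 1, 1], [2, 2, 2], [1, 1, 2], [2, 2, 2]]
coefs = agreement_coefficients(ratings, categories=[1, 2])
# Percent agreement ~0.833, Fleiss' kappa ~0.657, Gwet's AC1 ~0.676,
# Conger's kappa ~0.667, Brennan-Prediger ~0.667
print(coefs)
print(jackknife_se(ratings, "Gwet's AC1", [1, 2]))
```

Passing the scale explicitly keeps the number of categories fixed when a subject is left out, which matters for Gwet's AC1 and Brennan-Prediger.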

You will note in the rightmost column, associated with "Var4", that each agreement coefficient estimate carries a comment qualifying its magnitude and showing the associated benchmark interval and the various probabilities. If needed, please review the procedure for benchmarking agreement coefficients.
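The probabilities attached to each estimate reflect the idea that a benchmark label should account for sampling error, not just the point estimate. The Python sketch below illustrates a probabilistic benchmarking approach of the kind described by Gwet. It computes the probability that the true coefficient falls in each Landis-Koch level and accumulates these probabilities from the top level down. It then keeps the first level whose cumulative probability reaches 95%. The normal approximation, the open-ended extreme levels, and the names `LEVELS`, `normal_cdf`, and `benchmark` are assumptions of this sketch; AgreeStat360 may compute these probabilities differently.

```python
import math

# Landis-Koch levels from highest to lowest: (lower, upper, label).
# Assumption of this sketch: the extreme levels are open-ended so that the
# membership probabilities sum to 1.
LEVELS = [
    (0.8, math.inf, "Almost Perfect"),
    (0.6, 0.8, "Substantial"),
    (0.4, 0.6, "Moderate"),
    (0.2, 0.4, "Fair"),
    (0.0, 0.2, "Slight"),
    (-math.inf, 0.0, "Poor"),
]


def normal_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def benchmark(coef, se, threshold=0.95):
    """Pick the highest level whose cumulative membership probability reaches threshold.

    Returns (label, rows) with rows = [(label, membership_prob, cumulative_prob), ...].
    """
    if se <= 0:
        raise ValueError("standard error must be positive")
    rows, cumulative, chosen = [], 0.0, None
    for lower, upper, label in LEVELS:
        # P(lower < true coefficient <= upper) under a normal approximation
        p = normal_cdf((upper - coef) / se) - normal_cdf((lower - coef) / se)
        cumulative += p
        rows.append((label, p, cumulative))
        if chosen is None and cumulative >= threshold:
            chosen = label
    return chosen, rows


label, rows = benchmark(0.9, 0.1)
print(label)  # Substantial
```

In this example the point estimate 0.9 alone would be labeled Almost Perfect, but with a standard error of 0.1 the probability of exceeding 0.8 is only about 0.84, so the 95% rule settles on Substantial.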